You matter and belong here.

Explore your interests, passions, and callings through activities, clubs, and more. Campus Life at King's is about meeting new people and encountering new experiences, all in a community that nurtures faith.

More in this section

Live in Residence

Live on campus and experience student life at its best. Rooms, suites, and apartment options available.

Life at King's

View our photo gallery to see annual and special student events.

Get Involved

Check out co-curricular opportunities on campus. Where will you make a difference?

Go Eagles

Soccer, basketball, volleyball, and more—come support your team!

First-Year Connect Groups

Get off to the right start. First-Year Connect Groups are spaces to make new connections and friendships from the very beginning of your degree.

Campus Ministries

Grow in your faith through chapel, small groups, and pop-up events!

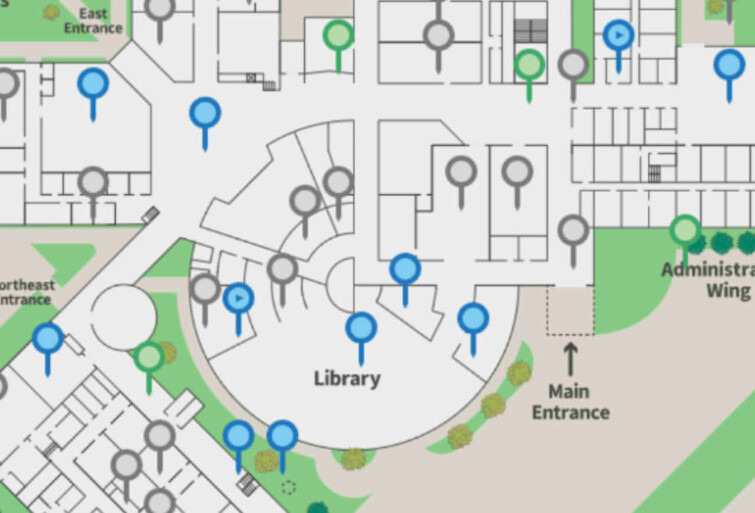

Explore Campus

Discover your future campus through our interactive map.

Students' Association

Meet your reps, go to events, and get involved in student government!
